Summary

On August 2nd, 2022, the Nomad Bridge, a cross-chain asset bridge, was attacked, resulting in the loss of nearly $200M. The root cause was an incorrect check in the upgraded version of its on-chain Replica contract.

The Background

Nomad Bridge is an emerging cross-chain asset bridge that uses a fraud-proof based design. It works as follows:

- Nomad deploys a core contract named Replica on each supported blockchain as the mailbox for incoming cross-chain messages.

- Off-chain agents relay cross-chain messages, arrange them in a Merkle tree, and update the tree root by posting a signed new root hash to this contract.

- New messages that need to be confirmed on-chain must go through both the prove() and the process() procedures:

  - The prove() procedure verifies the message and its proof against the Merkle tree, then marks the message as proven.

  - The process() procedure checks and executes the message if the message has previously been proven and the associated tree root is confirmed.
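To make the prove() step more concrete, here is a minimal Python sketch of how a leaf and its sibling hashes (the proof) fold into a Merkle root. It assumes the standard incremental-Merkle-tree pattern that MerkleLib.branchRoot(_leaf, _proof, _index) appears to follow: bit i of the index says whether the running node is a left or right child at level i. The depth is taken from the proof length so a small tree can be checked (the contract uses 32 levels, per its bytes32[32] proof), and hashlib's SHA3-256 stands in for Ethereum's keccak256; they are different functions, so digests will not match on-chain values.

```python
from hashlib import sha3_256


def h(left: bytes, right: bytes) -> bytes:
    # Stand-in for keccak256(abi.encodePacked(left, right)); SHA3-256 is NOT keccak256.
    return sha3_256(left + right).digest()


def branch_root(leaf: bytes, proof: list[bytes], index: int) -> bytes:
    """Fold a leaf and its sibling hashes into a Merkle root.

    Bit i of `index` is 1 when the running node is a right child at level i.
    """
    node = leaf
    for i, sibling in enumerate(proof):
        if (index >> i) & 1:
            node = h(sibling, node)  # running node is the right child
        else:
            node = h(node, sibling)  # running node is the left child
    return node


# Build a hypothetical 4-leaf tree (depth 2) and check every leaf's proof.
leaves = [sha3_256(bytes([i])).digest() for i in range(4)]
level1 = [h(leaves[0], leaves[1]), h(leaves[2], leaves[3])]
root = h(level1[0], level1[1])

proofs = {
    0: [leaves[1], level1[1]],
    1: [leaves[0], level1[1]],
    2: [leaves[3], level1[0]],
    3: [leaves[2], level1[0]],
}
for idx, proof in proofs.items():
    assert branch_root(leaves[idx], proof, idx) == root
```

A message is accepted as proven only if the root it folds into is one the contract considers acceptable; the hash function and tree depth here are illustrative assumptions.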

The Code

On Ethereum, the Replica is a beacon proxy deployed at 0x5d94309e5a0090b165fa4181519701637b6daeba. There are two versions of its logic (implementation) contract: the first is deployed at 0x7f58bb8311db968ab110889f2dfa04ab7e8e831b, and the second at 0xb92336759618f55bd0f8313bd843604592e27bd8.

We first examine the previous version of the logic contract, specifically its process() function:

```solidity
function process(bytes memory _message) public returns (bool _success) {
    bytes29 _m = _message.ref(0);
    // ensure message was meant for this domain
    require(_m.destination() == localDomain, "!destination");
    // ensure message has been proven
    bytes32 _messageHash = _m.keccak();
    require(messages[_messageHash] == MessageStatus.Proven, "!proven");
    // check re-entrancy guard
    require(entered == 1, "!reentrant");
    entered = 0;
    // update message status as processed
    messages[_messageHash] = MessageStatus.Processed;
    // ... (rest of the function omitted)
}
```

We show only part of this function. In this segment, the message hash is computed and checked against the messages mapping to ensure the message has previously been proven; then the re-entrancy guard is checked, and finally the message status is updated to Processed.
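The old check can be modeled in a few lines of Python. This is a hypothetical sketch, not the contract: OldReplicaModel is an illustrative name, SHA3-256 stands in for keccak256, and we assume the re-entrancy guard is released at the end of the call (that part of the function is not shown in the excerpt). It shows the two properties that matter here: an unknown message reads as status 0 (None) and is rejected, and a processed message cannot be replayed.

```python
from enum import IntEnum
from hashlib import sha3_256


class MessageStatus(IntEnum):
    # Same numeric values as the old Solidity enum.
    NONE = 0
    PROVEN = 1
    PROCESSED = 2


class OldReplicaModel:
    """Hypothetical Python model of the checks in the old process() excerpt."""

    def __init__(self) -> None:
        self.messages: dict[bytes, MessageStatus] = {}
        self.entered = 1  # re-entrancy guard: 1 means "not currently processing"

    def status(self, message_hash: bytes) -> MessageStatus:
        # Like a Solidity mapping, a key that was never written reads as zero (NONE).
        return self.messages.get(message_hash, MessageStatus.NONE)

    def process(self, message: bytes) -> None:
        message_hash = sha3_256(message).digest()  # stand-in for keccak256
        if self.status(message_hash) != MessageStatus.PROVEN:
            raise ValueError("!proven")
        if self.entered != 1:
            raise RuntimeError("!reentrant")
        self.entered = 0
        self.messages[message_hash] = MessageStatus.PROCESSED
        # (dispatching the message to its recipient is omitted here)
        self.entered = 1  # assumption: the guard is released at the end of the call


replica = OldReplicaModel()
msg = b"hypothetical cross-chain message"
# Pretend prove() already succeeded for this message.
replica.messages[sha3_256(msg).digest()] = MessageStatus.PROVEN
replica.process(msg)  # succeeds and marks the message PROCESSED
try:
    replica.process(msg)  # replay attempt
except ValueError as err:
    print(err)  # prints "!proven"
```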

We also briefly review the old prove() function:

```solidity
function prove(
    bytes32 _leaf,
    bytes32[32] calldata _proof,
    uint256 _index
) public returns (bool) {
    // ensure that message has not been proven or processed
    require(messages[_leaf] == MessageStatus.None, "!MessageStatus.None");
    // calculate the expected root based on the proof
    bytes32 _calculatedRoot = MerkleLib.branchRoot(_leaf, _proof, _index);
    // if the root is valid, change status to Proven
    if (acceptableRoot(_calculatedRoot)) {
        messages[_leaf] = MessageStatus.Proven;
        return true;
    }
    return false;
}
```

Nothing special here: a duplication check, then the tree root is calculated, and if the root is acceptable, the message is marked as proven. So in the old version of the Replica contract, every proven message carries a dedicated marker (MessageStatus.Proven = 1).
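A minimal Python sketch of the old prove() makes the marker explicit. It is hypothetical: old_prove is an illustrative name, the already-computed calculated_root stands in for MerkleLib.branchRoot(_leaf, _proof, _index), and a set of acceptable roots stands in for acceptableRoot(). The only way an entry reaches PROVEN (1) is through an acceptable root, and a leaf that is not at the zero default cannot be proven again.

```python
from enum import IntEnum


class MessageStatus(IntEnum):
    NONE = 0
    PROVEN = 1
    PROCESSED = 2


def old_prove(messages: dict, leaf: bytes, calculated_root: bytes, acceptable_roots: set) -> bool:
    """Hypothetical model of the old prove().

    `calculated_root` stands in for MerkleLib.branchRoot(_leaf, _proof, _index),
    and `acceptable_roots` for the roots that acceptableRoot() would accept.
    """
    # Duplication check: only a leaf still at the zero default (NONE) may be proven.
    if messages.get(leaf, MessageStatus.NONE) != MessageStatus.NONE:
        raise ValueError("!MessageStatus.None")
    if calculated_root in acceptable_roots:
        messages[leaf] = MessageStatus.PROVEN  # dedicated marker, numerically 1
        return True
    return False


messages = {}
good_root, bad_root = b"\x11" * 32, b"\x22" * 32  # hypothetical roots
print(old_prove(messages, b"leaf-a", good_root, {good_root}))  # True
print(old_prove(messages, b"leaf-b", bad_root, {good_root}))   # False
```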

Next, let's examine the second version of the logic contract, starting with its prove() function:

```solidity
function prove(
    bytes32 _leaf,
    bytes32[32] calldata _proof,
    uint256 _index
) public returns (bool) {
    // ensure that message has not been processed
    // Note that this allows re-proving under a new root.
    require(
        messages[_leaf] != LEGACY_STATUS_PROCESSED,
        "already processed"
    );
    // calculate the expected root based on the proof
    bytes32 _calculatedRoot = MerkleLib.branchRoot(_leaf, _proof, _index);
    // if the root is valid, change status to Proven
    if (acceptableRoot(_calculatedRoot)) {
        messages[_leaf] = _calculatedRoot;
        return true;
    }
    return false;
}
```

One major change stands out immediately: for some reason, the developers decided to record the calculated root as the proof status instead of a dedicated marker. Within this function that is fine, because the Merkle tree root hash is ensured to be non-zero. It is also a reasonable design: as soon as a tree root is confirmed, any message proven under that root is ready to be executed.
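The new storage scheme can be sketched in Python as follows. This is a hypothetical model: new_prove is an illustrative name, `acceptable` is any callable standing in for acceptableRoot(), and the legacy constant is encoded as a 32-byte big-endian value to mirror bytes32(uint256(2)). It shows that the stored value is now the root itself, and that re-proving under a new root simply overwrites the entry, as the contract comment notes.

```python
ZERO = bytes(32)                                   # bytes32(0)
LEGACY_STATUS_PROCESSED = (2).to_bytes(32, "big")  # bytes32(uint256(2))


def new_prove(messages: dict, leaf: bytes, calculated_root: bytes, acceptable) -> bool:
    """Hypothetical model of the new prove(): the stored value is the root itself."""
    # Only "already processed" blocks a proof; re-proving under a new root is allowed.
    if messages.get(leaf, ZERO) == LEGACY_STATUS_PROCESSED:
        raise ValueError("already processed")
    if acceptable(calculated_root):
        messages[leaf] = calculated_root  # the root doubles as the "proven" marker
        return True
    return False


root_a, root_b = b"\xa1" * 32, b"\xb2" * 32  # hypothetical confirmed roots
accepted = {root_a, root_b}
messages = {}
new_prove(messages, b"leaf", root_a, accepted.__contains__)
new_prove(messages, b"leaf", root_b, accepted.__contains__)  # overwrites root_a
print(messages[b"leaf"] == root_b)  # True
```

Because a valid calculated root is never zero, an entry that still reads as zero means "never proven" as far as this function is concerned.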

Then we check the process() function in the new version:

```solidity
function process(bytes memory _message) public returns (bool _success) {
    // ensure message was meant for this domain
    bytes29 _m = _message.ref(0);
    require(_m.destination() == localDomain, "!destination");
    // ensure message has been proven
    bytes32 _messageHash = _m.keccak();
    require(acceptableRoot(messages[_messageHash]), "!proven");
    // check re-entrancy guard
    require(entered == 1, "!reentrant");
    entered = 0;
    // update message status as processed
    messages[_messageHash] = LEGACY_STATUS_PROCESSED;
    // ... (rest of the function omitted)
}
```

Note the messages[_messageHash] lookup. A common pitfall in Solidity is that reading a mapping entry that was never written returns zero instead of failing. Here, that means the Merkle root recorded for an unknown message hash reads as zero, so whether such a message is accepted depends entirely on what acceptableRoot() returns for a zero root. We therefore need to look carefully at the new acceptableRoot() function:

```solidity
function acceptableRoot(bytes32 _root) public view returns (bool) {
    // this is backwards-compatibility for messages proven/processed
    // under previous versions
    if (_root == LEGACY_STATUS_PROVEN) return true;
    if (_root == LEGACY_STATUS_PROCESSED) return false;

    uint256 _time = confirmAt[_root];
    if (_time == 0) {
        return false;
    }
    return block.timestamp >= _time;
}
```

Apart from the two legacy cases, this function consults the confirmAt mapping to determine whether the Merkle tree root has been confirmed and its confirmation time has been reached.
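A direct Python transcription of acceptableRoot() makes its cases easy to try out. It is a sketch under stated assumptions: the legacy constants are encoded as 32-byte big-endian values (bytes32(uint256(1)) and bytes32(uint256(2))), confirm_at is a plain dict whose missing keys read as 0 like a Solidity mapping, and the `now` parameter plays the role of block.timestamp. The root and timestamps below are hypothetical.

```python
LEGACY_STATUS_PROVEN = (1).to_bytes(32, "big")     # bytes32(uint256(1))
LEGACY_STATUS_PROCESSED = (2).to_bytes(32, "big")  # bytes32(uint256(2))


def acceptable_root(root: bytes, confirm_at: dict, now: int) -> bool:
    """Python transcription of the new acceptableRoot(); `now` plays block.timestamp."""
    # Backwards compatibility for messages proven/processed under the old version.
    if root == LEGACY_STATUS_PROVEN:
        return True
    if root == LEGACY_STATUS_PROCESSED:
        return False
    t = confirm_at.get(root, 0)  # a root that was never confirmed reads as 0
    if t == 0:
        return False
    return now >= t


root = b"\x42" * 32         # hypothetical root
confirm_at = {root: 1_000}  # hypothetical confirmation time
print(acceptable_root(root, confirm_at, now=999))            # False: not yet reached
print(acceptable_root(root, confirm_at, now=1_000))          # True
print(acceptable_root(b"\x43" * 32, confirm_at, now=1_000))  # False: unknown root
```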

Unfortunately, in BOTH versions of the Replica contract, the initializer sets the confirmAt entry for the zero hash to 1:

```solidity
function initialize(
    uint32 _remoteDomain,
    address _updater,
    bytes32 _committedRoot, // this is zero at initialization
    uint256 _optimisticSeconds
) public initializer {
    __NomadBase_initialize(_updater);
    // set storage variables
    entered = 1;
    remoteDomain = _remoteDomain;
    committedRoot = _committedRoot;
    // pre-approve the committed root.
    confirmAt[_committedRoot] = 1;
    _setOptimisticTimeout(_optimisticSeconds);
}
```

In the old version of the Replica contract this is harmless: prove() never produces a zero root, and process() checks the dedicated Proven marker rather than a root, so the confirmAt entry of 1 for the zero hash is never reached.

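In the new version, however, messages[_messageHash] returns zero for any message that has never been proven. acceptableRoot() is then called with a zero root: it skips both legacy checks (LEGACY_STATUS_PROVEN and LEGACY_STATUS_PROCESSED are 1 and 2, not 0), reads confirmAt[0], which the initializer set to 1, and since block.timestamp >= 1, returns true.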
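The whole chain can be reproduced in a small Python model that puts the old and new process() checks side by side on the same post-initialization state. This is a hypothetical sketch: old_check and new_check are illustrative names covering only the proof check in each version, SHA3-256 stands in for keccak256, the timestamp is made up, and missing dict keys read as zero to mimic Solidity mappings.

```python
from hashlib import sha3_256

ZERO = bytes(32)
LEGACY_STATUS_PROVEN = (1).to_bytes(32, "big")
LEGACY_STATUS_PROCESSED = (2).to_bytes(32, "big")
OLD_STATUS_PROVEN = 1  # MessageStatus.Proven in the old enum


def acceptable_root(root: bytes, confirm_at: dict, now: int) -> bool:
    # Same logic as the new acceptableRoot().
    if root == LEGACY_STATUS_PROVEN:
        return True
    if root == LEGACY_STATUS_PROCESSED:
        return False
    t = confirm_at.get(root, 0)
    return t != 0 and now >= t


def old_check(messages: dict, message_hash: bytes) -> bool:
    # Old process(): require(messages[_messageHash] == MessageStatus.Proven)
    return messages.get(message_hash, 0) == OLD_STATUS_PROVEN


def new_check(messages: dict, message_hash: bytes, confirm_at: dict, now: int) -> bool:
    # New process(): require(acceptableRoot(messages[_messageHash]))
    return acceptable_root(messages.get(message_hash, ZERO), confirm_at, now)


# State after initialize() with _committedRoot == 0: confirmAt[0] = 1.
confirm_at = {ZERO: 1}
now = 1_659_000_000  # hypothetical block.timestamp
forged_hash = sha3_256(b"forged, never proven").digest()  # stand-in for keccak256

print(old_check({}, forged_hash))                   # False
print(new_check({}, forged_hash, confirm_at, now))  # True
```

With an empty messages mapping, the old check rejects the unproven message while the new check accepts it, purely because the zero default is looked up as a pre-approved root.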

The Attack

From the analysis above, any previously unseen message passes the validation logic and gets executed. An attacker therefore only needs to forge a message addressed to this domain and call process() directly.
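The sketch below illustrates why forging is cheap. It ASSUMES a message layout based on our reading of Nomad's Message library (origin domain uint32, sender bytes32, nonce uint32, destination uint32, recipient bytes32, then the body, packed without padding); treat that layout, the domain IDs and the body as hypothetical. The only field process() validates before the mapping lookup is the destination, and changing any other field (here the nonce) yields a fresh, never-seen message hash, which reads as a zero root.

```python
import struct
from hashlib import sha3_256

# ASSUMED layout: originDomain u32 | sender b32 | nonce u32 | destination u32 | recipient b32
HEADER = struct.Struct(">I32sII32s")  # big-endian, no padding: 76 bytes


def format_message(origin, sender, nonce, destination, recipient, body):
    return HEADER.pack(origin, sender, nonce, destination, recipient) + body


def destination(message: bytes) -> int:
    # Under the assumed layout the destination domain sits at bytes 40..44.
    return int.from_bytes(message[40:44], "big")


LOCAL_DOMAIN = 1000  # hypothetical domain ID of this Replica
ZERO = bytes(32)
messages = {}  # nothing has been proven: every lookup returns the zero default

seen = set()
for nonce in range(3):
    msg = format_message(2000, ZERO, nonce, LOCAL_DOMAIN, b"\xaa" * 32, b"hypothetical body")
    assert destination(msg) == LOCAL_DOMAIN  # passes the "!destination" check
    h = sha3_256(msg).digest()               # stand-in for keccak256
    assert messages.get(h, ZERO) == ZERO     # unseen hash reads as root 0
    seen.add(h)
print(len(seen))  # 3
```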

Interestingly, the first call to the process() function of this contract happened just two days ago (at block 15249565), in transaction 0xa654fd4152f4734fcd774dd64b618b22a1561e2528b7b8e4500d20edb05b3ba0.

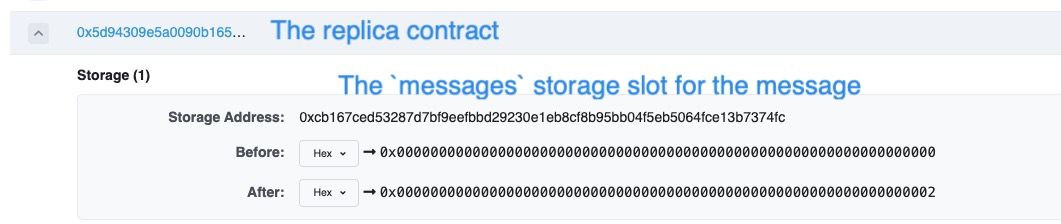

As the figure shows, the storage slot of the messages mapping entry for this message was originally zero, meaning that the Replica contract had no previous record of this message.

The slot was then set to two (i.e., LEGACY_STATUS_PROCESSED, meaning the message has been processed). This indicates that an invalid message bypassed the prove() logic and was processed directly.

Conclusion

This is another classic attack exploiting an unchecked default value read from a mapping. Solidity developers should pay special attention to the zero value returned for missing mapping keys, especially when an upgrade changes what the stored values mean.
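One possible defensive pattern, sketched in Python and not necessarily how Nomad patched the contract, is to treat the mapping's zero default explicitly as "no entry" before it can ever be interpreted as a root. hardened_acceptable_root is a hypothetical name; the constants and the dict-as-mapping convention follow the earlier models, and the root and timestamps are made up. Another option would be to avoid pre-approving a zero committed root in the initializer at all.

```python
ZERO = bytes(32)
LEGACY_STATUS_PROVEN = (1).to_bytes(32, "big")
LEGACY_STATUS_PROCESSED = (2).to_bytes(32, "big")


def hardened_acceptable_root(root: bytes, confirm_at: dict, now: int) -> bool:
    # Hypothetical guard: a zero value means "no entry", never a usable root.
    if root == ZERO:
        return False
    if root == LEGACY_STATUS_PROVEN:
        return True
    if root == LEGACY_STATUS_PROCESSED:
        return False
    t = confirm_at.get(root, 0)
    return t != 0 and now >= t


# The zero root is still pre-approved, as after initialize(), plus one real root.
confirm_at = {ZERO: 1, b"\x42" * 32: 1_000}
print(hardened_acceptable_root(ZERO, confirm_at, now=2_000))          # False
print(hardened_acceptable_root(b"\x42" * 32, confirm_at, now=2_000))  # True
```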